When building a User Interface (UI) for a HoloLens 2 app in Unreal Engine, you don’t have to start from scratch. You can use some of the building blocks from Microsoft’s Mixed Reality Toolkit.

This example is from the development of AnatoMe, an experimental project to teach students about human anatomy through an interactive hologram. Further details can be found on the AnatoMe development page or the other development-related pages listed below:

- AnatoMe development

- Building a UI for a HoloLens 2 app (you are here!)

- Using the Variant Manager to control an AR scene

- Adding tooltips in Augmented Reality

Microsoft Mixed Reality Toolkit – UX Tools

To assist Unreal Engine developers targeting the HoloLens 2 device, Microsoft has built the Mixed Reality Toolkit, or MRTK for short. Included in this toolkit is a set of plugins called ‘UX Tools’ that allow you to build menus optimised for a HoloLens 2 experience. These include ‘Near Menus’ that can follow the user, or be grabbed and moved or pinned to another virtual location in real time. When the project is tested within the Unreal Engine editor, a pair of simulated blue hands can be controlled using the keyboard, so the hands and fingers can interact with the menus for testing purposes.

Once the plugin is downloaded and activated, a set of tools and examples becomes available. One set of menus is the ‘Near Menus’, which contain a number of buttons with icons and text labels. The menus have a ‘pin’ toggle button that controls a UxtFollowComponent, which allows the menu to either follow the user or remain in a fixed position in world space. This versatile menu seemed the ideal place to start for this project.

Customising the Menu

The initial menu requirement was to include four buttons that would be used to switch between views of bone, blood vessels, muscle, and skin (but leave enough room for two additional buttons for future functionality if required). To do this, I selected the buttons to remove and changed their Child Actor Component to None. To edit the labels of the remaining buttons, I needed to expand the Child Actor Template and under Uxt Pressable Button change the Label text.

The button icons can be changed under Icon Brush > Edit > Icon brush editor, and I selected icons that could be interpreted as four different layers. I then continued to test the menu in the editor as it was being built. The buttons could be interacted with, but they were not mutually exclusive: several buttons could be depressed at the same time, which did not match the required functionality.

To resolve this, I needed to create a toggle group that all four buttons would belong to. I added the UxtToggleGroup component and then edited the Uxt Toggle Group settings.

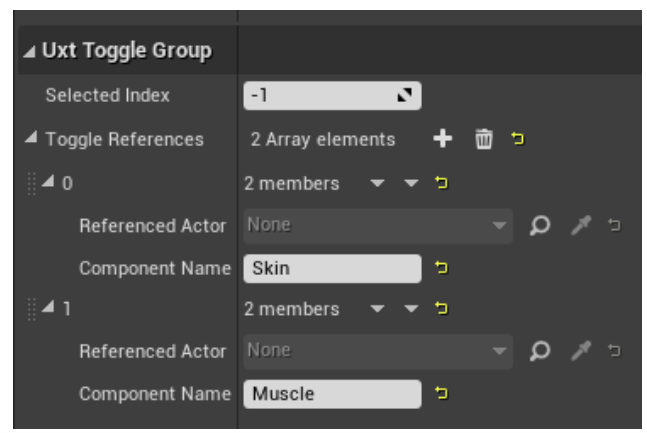

The toggle group references an array that can be created manually using the group settings. Once a new ‘member’ is added, you can enter its Component Name. This is a string value, so accuracy here is key: a name that does not match exactly could cause a bug that is troublesome to track down.

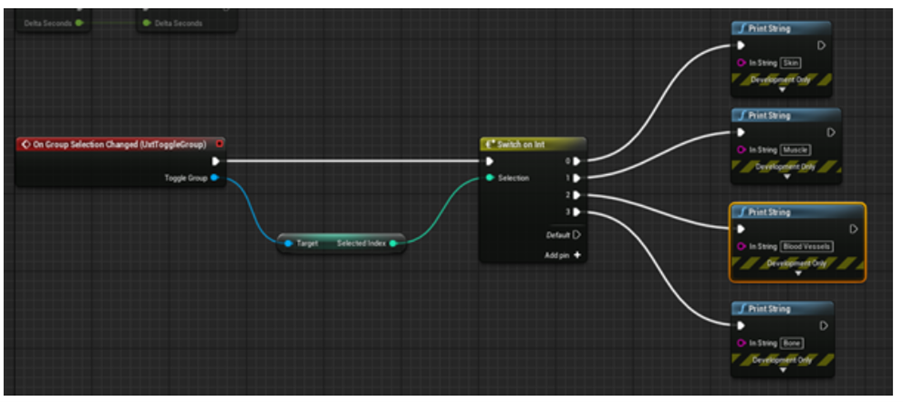
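Because members are matched by name, a typo is easy to miss. The standalone C++ sketch below pictures one way to check a list of member names against the components that actually exist. It is an illustration only and not part of UX Tools: the function `findUnresolvedMembers` and every component name in it are hypothetical.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

// Returns every member name that does not exactly match a known component.
// Hypothetical helper for illustration; this is not a UX Tools function.
std::vector<std::string> findUnresolvedMembers(
    const std::set<std::string>& componentNames,
    const std::vector<std::string>& memberNames) {
    std::vector<std::string> missing;
    for (const std::string& name : memberNames) {
        if (componentNames.count(name) == 0) {
            missing.push_back(name);  // exact, case-sensitive comparison
        }
    }
    return missing;
}

// Usage sketch with made-up names: the misspelt third entry is reported.
void demoTypoIsReported() {
    const std::set<std::string> components = {
        "ButtonBone", "ButtonVessels", "ButtonMuscle", "ButtonSkin"};
    const std::vector<std::string> members = {
        "ButtonBone", "ButtonVessels", "ButtonMuscel", "ButtonSkin"};
    const std::vector<std::string> missing =
        findUnresolvedMembers(components, members);
    assert(missing.size() == 1 && missing[0] == "ButtonMuscel");
}
```

An empty result means every name resolved. In the editor, the equivalent check is simply comparing each Component Name against the component list by eye.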

Once the toggle references were added, only a single button could be active at a time. The next task was to test that the buttons could trigger independent events. To set up events from buttons in a group, I had to select the UxtToggleGroup component and click the first event in the properties: On Group Selection.
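The single-selection behaviour described above can be pictured with a small standalone C++ model. This is a sketch of the idea only, not the UX Tools implementation: `ToggleButton`, `ToggleGroup`, `select`, `selectedIndex` and `checkedCount` are hypothetical names, and the labels are the four views used in this project.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for a toggle button; not the UX Tools class.
struct ToggleButton {
    std::string label;
    bool checked;
};

// Hypothetical model of a toggle group: at most one member is checked.
class ToggleGroup {
public:
    explicit ToggleGroup(const std::vector<std::string>& labels) {
        for (const std::string& label : labels) {
            buttons_.push_back(ToggleButton{label, false});
        }
    }

    // Checks the button at `index` and unchecks all others.
    // Returns false and changes nothing if the index is out of range.
    bool select(std::size_t index) {
        if (index >= buttons_.size()) {
            return false;
        }
        for (std::size_t i = 0; i < buttons_.size(); ++i) {
            buttons_[i].checked = (i == index);
        }
        selected_ = static_cast<int>(index);
        assert(checkedCount() == 1);  // group invariant after a selection
        return true;
    }

    // Index of the checked button, or -1 if nothing has been selected yet.
    int selectedIndex() const { return selected_; }

    // Number of buttons currently checked.
    std::size_t checkedCount() const {
        std::size_t count = 0;
        for (const ToggleButton& button : buttons_) {
            if (button.checked) {
                ++count;
            }
        }
        return count;
    }

private:
    std::vector<ToggleButton> buttons_;
    int selected_ = -1;
};
```

Without a group, each button keeps its own state, which is why several could be depressed at once. The group keeps the state in one place and enforces the rule on every selection.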

I then used a Switch on Int node in my Blueprint to print a different string for each button. During the test, each button sent out the appropriate string value when pressed.
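In C++ terms, a Switch on Int node behaves much like a `switch` statement on the selected index. The sketch below is an illustration under assumptions rather than code from the project: the function name `messageForSelection`, the button order and the message strings are all made up for the example.

```cpp
#include <cassert>
#include <string>

// Maps the selected button index to the string that would be printed.
// The order (0 = bone ... 3 = skin) is an assumption for this sketch.
std::string messageForSelection(int index) {
    switch (index) {
        case 0:
            return "Bone selected";
        case 1:
            return "Blood vessels selected";
        case 2:
            return "Muscle selected";
        case 3:
            return "Skin selected";
        default:
            // Covers the two spare slots and any unexpected index.
            return "Unknown selection";
    }
}

// Usage sketch: neighbouring indices produce different messages.
void checkMessagesDiffer() {
    for (int i = 0; i < 3; ++i) {
        assert(messageForSelection(i) != messageForSelection(i + 1));
    }
}
```

Printing a distinct string per branch is a quick way to confirm that each button reaches its own path before any real behaviour is attached.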

Summary

User input can be achieved through the use of ‘Near Menus’ from the UX Tools plugin. These follow the existing design patterns of Windows Mixed Reality applications, so they should be intuitive for the end user. This customisable and extensible menu will be useful as development progresses and if additional functionality is required.

Next steps

Connect the menu buttons to events that trigger the appropriate Variant Manager changes.
