Background

AnatoMe is an experimental project that aims to teach students about human anatomy through an interactive hologram! The initiative was a joint venture funded by USC and Griffith University that included the purchase of a Microsoft HoloLens 2 device. My role in the project was to design and build the prototype that met these initial requirements:

“Build a ‘Proof of concept’ multi-level simulation of a human arm using Augmented Reality. Produce an interactive model of an arm from hand to elbow, that when activated, can switch between levels of detail (such as skeletal, blood vessels, muscle, and skin).”

Watch the video for a quick overview of the project or review the development notes below.

Design

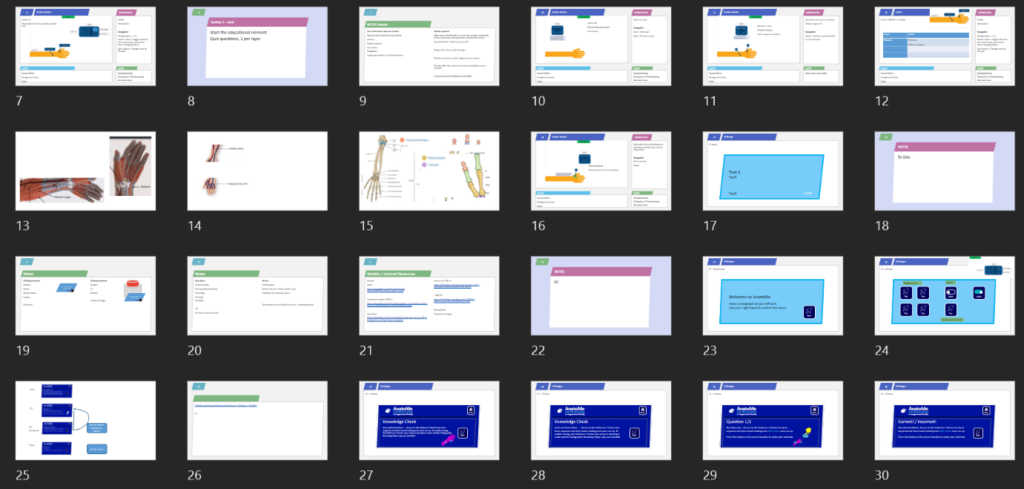

Once the initial design brief had been discussed with stakeholders, work started on a storyboard that planned a series of prototypes, each aimed at solving a defined user problem. While initial development tests were carried out, the storyboard was developed further with input from the USC anatomy lecturer regarding ideal body parts to showcase in the app.

Development setup

In order to start developing a HoloLens 2 app, a number of software applications and tools needed to be set up and configured. This included the Windows environment, Microsoft’s Visual Studio, and Unreal Engine’s configuration and plugins. Full details of the development environment setup can be accessed here.

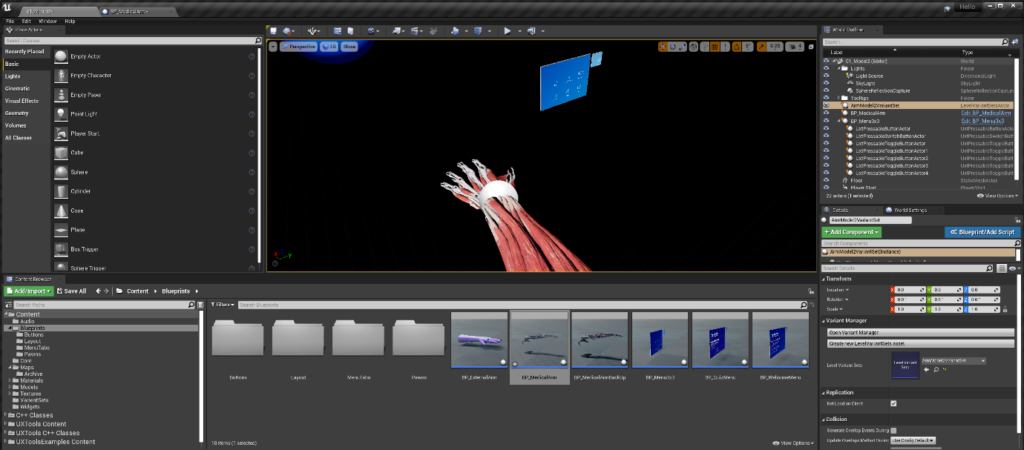

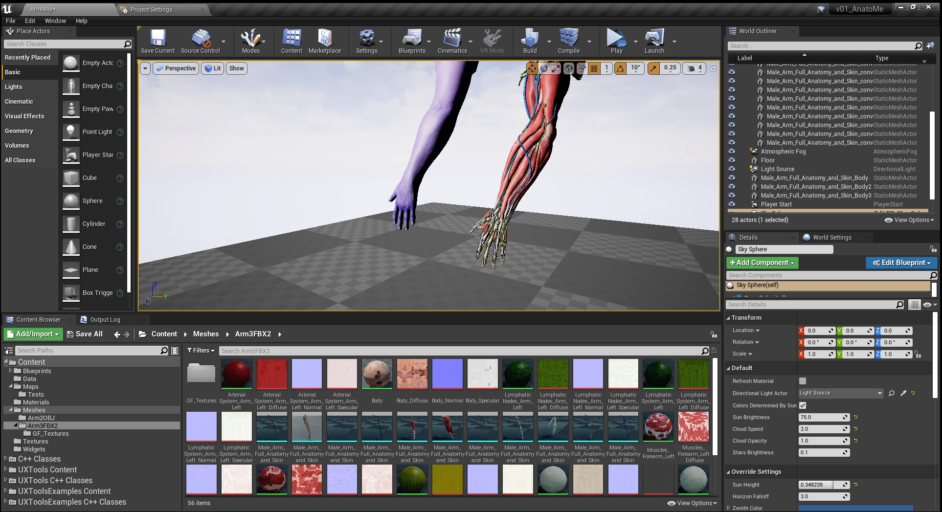

The software used in the development of the AnatoMe app was Unreal Engine and 3DS Max. Unreal Engine is primarily a 3D game engine that can also be used for product visualisations, interactive training, and simulations. It’s an ideal tool for HoloLens 2 development as Microsoft has developed a Mixed Reality Toolkit that can assist with some common design requirements.

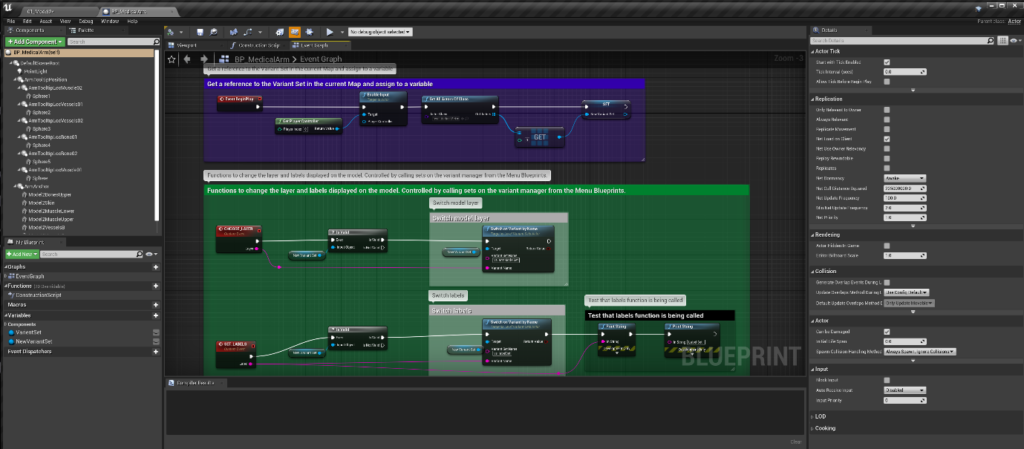

Unreal Engine allows you to work in 3D space and program your project using either C++ code or Blueprint visual scripting.

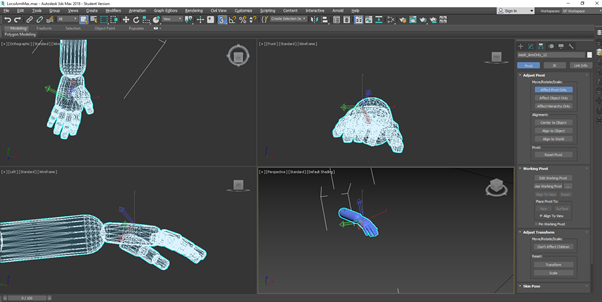

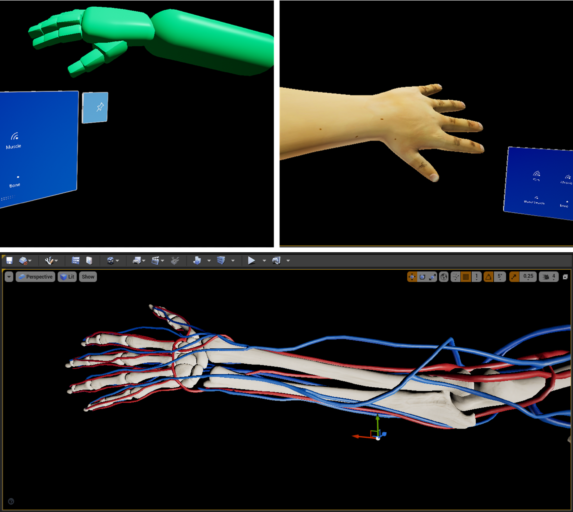

3DS Max is a 3D modelling tool that enables you to create or edit 3D models. These 3D models are then imported into Unreal Engine and used as part of the app being built. This tool was used to modify existing low-poly models so they matched the requirements of the project.

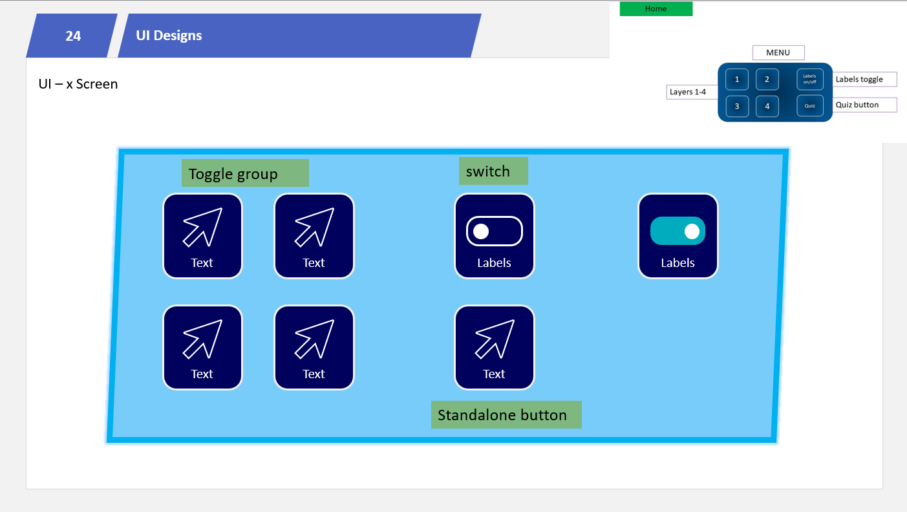

User Interface (UI) for Augmented Reality

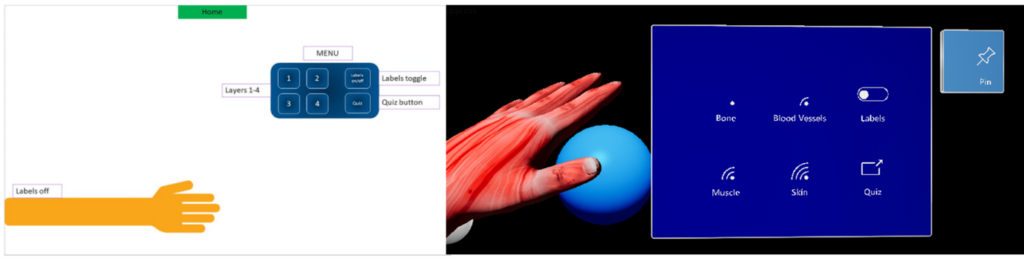

Utilising existing design patterns for the HoloLens 2, the UX Tools plugin for Unreal Engine provided a set of building blocks for UI development. This included a number of customisable menus and buttons. Before building these interfaces in Unreal Engine, they were storyboarded to design menu layout and functions.

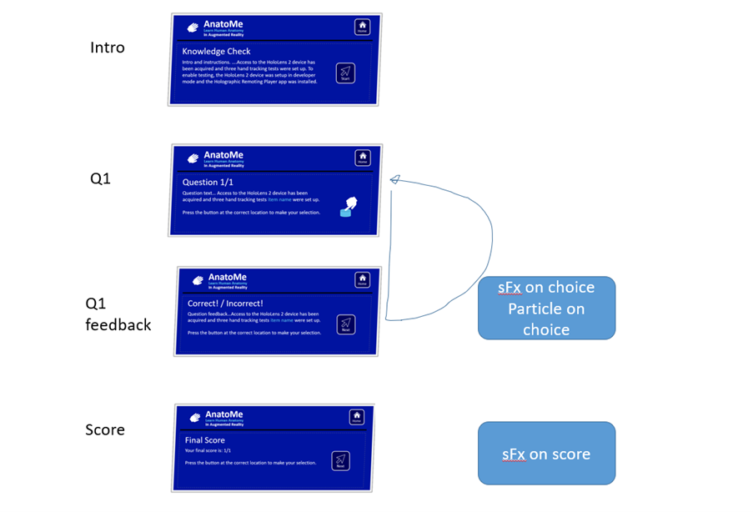

For the quiz menu, the layout and flow of the user experience were also storyboarded to include question and feedback loops.

To view development notes on how the User Interface was built, visit Building a UI for a HoloLens 2 app.

3D Models

The model for the hologram of the user’s arm went through several iterations from low-poly, to mid-poly, and finally a high-poly model. The early models were used to test functionality and provide an early prototype (or Minimum Viable Product – MVP) for stakeholders and subject matter experts to review. The image below shows the three stages of model use in the project.

The final model used was a realistic and anatomically accurate model that met the educational requirements of the project. This model included various meshes that provided the views of skin, muscle, blood vessels, and bone.

The Variant Manager

The Variant Manager is a plugin for Unreal Engine that provides a visual dashboard for controlling the objects in your scene. This powerful interface was used to manage the different views of the arm. Initial experiments in changing the low-poly arm’s material are displayed in the animated gif below.

Further use of the Variant Manager included swapping the meshes of the high-poly model and automatically displaying the relevant tooltips using the ‘dependencies’ functionality. More information on this element of the project can be viewed on Using the Variant Manager to control an AR scene.

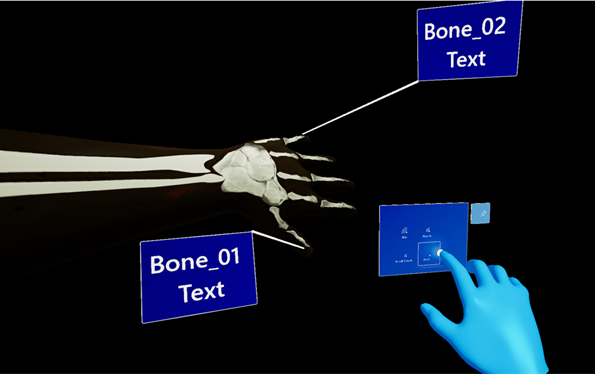
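To illustrate the idea of switching a variant and having its dependent tooltips follow, here is a minimal stand-alone C++ sketch. It is not the Variant Manager API and not code from the project: the `Scene`, `Variant`, and `ArmVariants` types, the `makeArmVariants` helper, and every mesh and tooltip name are hypothetical and exist only for illustration. In the real app this behaviour was configured through the Variant Manager's dashboard.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <utility>

// What is currently shown in the scene (all names are illustrative only).
struct Scene {
    std::string visibleMesh;
    std::string visibleTooltips;
};

// One variant: the mesh it shows plus the tooltip group that depends on it.
struct Variant {
    std::string mesh;
    std::string tooltipGroup;
};

// Hypothetical stand-in for a variant set; NOT the Unreal Variant Manager API.
class ArmVariants {
public:
    void add(const std::string& name, Variant variant) {
        assert(!name.empty());  // every variant needs a name to be selectable
        variants_[name] = std::move(variant);
    }

    // Switches to the named variant. Returns false and leaves the scene
    // unchanged if the name is unknown.
    bool switchOn(const std::string& name, Scene& scene) const {
        const auto it = variants_.find(name);
        if (it == variants_.end()) {
            return false;
        }
        scene.visibleMesh = it->second.mesh;
        // The "dependency": the matching tooltips are switched with the mesh.
        scene.visibleTooltips = it->second.tooltipGroup;
        return true;
    }

private:
    std::map<std::string, Variant> variants_;
};

// Builds the four views described in this article (asset names are assumed).
inline ArmVariants makeArmVariants() {
    ArmVariants variants;
    variants.add("Skin", {"ArmSkin", "SkinLabels"});
    variants.add("Muscle", {"ArmMuscle", "MuscleLabels"});
    variants.add("Blood vessels", {"ArmVessels", "VesselLabels"});
    variants.add("Bone", {"ArmBone", "BoneLabels"});
    return variants;
}
```

Calling `makeArmVariants().switchOn("Muscle", scene)` on a `Scene` would set both the visible mesh and its tooltip group in one step, which is the convenience the dependencies feature provided.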

Tooltips

The UX Tools plugin from Microsoft’s Mixed Reality Toolkit includes an optimised version of tooltips. This allows you to add labels in 3D space on your models, which fitted well with the educational needs of this HoloLens 2 app.

Once the tooltips were put in place, their visibility could be controlled via the UI menu. For details on how this feature was implemented, view the Adding tooltips in Augmented Reality page.

Tracking

Once the HoloLens 2 device was acquired for the project, I worked with the device’s hand tracking features. One test included drawing debug lines at the bone location of the user’s hand. As the hand moves, the visual marker for each bone follows the bone’s position. This test confirmed that the hand movements and bone positions were being tracked successfully and could be referenced in Unreal Engine. Some video clips of the tracking tests are provided below.
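Once a tracked bone pose is available, a hologram can be made to follow it; the finished prototype attaches the arm model to the user's wrist. The stand-alone C++ sketch below shows the underlying maths only, under stated assumptions: the `Vec3`, `Quat`, and `Pose` types and the `followWrist` function are hypothetical, the rotation is assumed to be a unit quaternion, and no engine coordinate conventions are modelled. The project itself relied on Unreal Engine's own transform types rather than code like this.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { double x, y, z; };
struct Quat { double x, y, z, w; };  // assumed to be a unit quaternion
struct Pose { Vec3 position; Quat rotation; };

inline Vec3 cross(const Vec3& a, const Vec3& b) {
    return {a.y * b.z - a.z * b.y,
            a.z * b.x - a.x * b.z,
            a.x * b.y - a.y * b.x};
}

// Rotates vector v by the unit quaternion q.
inline Vec3 rotate(const Quat& q, const Vec3& v) {
    const double lenSq = q.x * q.x + q.y * q.y + q.z * q.z + q.w * q.w;
    assert(std::fabs(lenSq - 1.0) < 1e-6);  // guard the unit-length assumption

    const Vec3 u{q.x, q.y, q.z};
    const Vec3 c = cross(u, v);
    const Vec3 t{2.0 * c.x, 2.0 * c.y, 2.0 * c.z};
    const Vec3 ct = cross(u, t);
    return {v.x + q.w * t.x + ct.x,
            v.y + q.w * t.y + ct.y,
            v.z + q.w * t.z + ct.z};
}

// Returns the pose of a model that sits at a fixed local offset from the
// wrist and shares the wrist's rotation, so it moves and turns with the hand.
inline Pose followWrist(const Pose& wrist, const Vec3& localOffset) {
    const Vec3 o = rotate(wrist.rotation, localOffset);
    return {{wrist.position.x + o.x,
             wrist.position.y + o.y,
             wrist.position.z + o.z},
            wrist.rotation};
}
```

Calling `followWrist` once per frame with the latest wrist pose keeps the model locked to the hand; the local offset is where any fine adjustment of the model's placement relative to the wrist would go.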

Development challenges

The project started without the physical HoloLens 2 device, so early prototyping was restricted to a simulated view inside Unreal Engine using the UX Tools plugin. Even so, a number of UI and interaction features were successfully developed before the hardware arrived. Once the device was available, two key elements of the development pipeline were established. Firstly, the ‘Holographic Remoting Player’ was installed on the HoloLens 2, which streams the current scene from within Unreal Engine directly to the device without the need to publish. This enabled rapid iterative development, as changes could be viewed on the device immediately. Secondly, the longer process of publishing to the actual device was set up, which provided further insight into how the app would perform.

Summary

A prototype of the AnatoMe app has been published to the physical HoloLens 2 device. It is available as a pinned app on the main menu and now uses the new high-quality anatomy model with four interchangeable meshes for skin, muscle, blood vessels, and bone. The model is anchored to the user’s wrist and follows the wrist’s position and rotation. Additional functionality has been developed in the form of a quiz, with a separate menu providing instructions and questions for the user. Feedback during the quiz is given through sound effects and voiceover narration from the anatomy lecturer.

An overview of the project can be viewed in the video below.

Additional development notes for this project can be found via the links below.

- AnatoMe development (you are here!)

- Building a UI for a HoloLens 2 app

- Using the Variant Manager to control an AR scene

- Adding tooltips in Augmented Reality